AKS Monolith Setup

This guide walks you through deploying ThingsBoard CE in monolith mode on Azure Kubernetes Service (AKS). We use Azure Database for PostgreSQL as the managed database.

Prerequisites

Install and configure tools

Install the kubectl and az CLI tools.
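Before continuing, it can help to confirm that the tools are actually on your PATH. The following is a minimal sketch: `check_tools` is a hypothetical helper name, not part of the ThingsBoard scripts, and `git` is included in the usage line on the assumption that you will clone the repository in Step 1.

```shell
# Report every required CLI tool that is not found on PATH.
# Returns 0 when all tools are present, 1 otherwise.
check_tools() {
  missing=0
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      echo "missing: $tool" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Usage for this guide:
#   check_tools kubectl az git || exit 1
```

The helper only checks that each command exists; it does not verify versions or that you are logged in.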

Log in to Azure:

    az login

Step 1. Clone ThingsBoard CE K8S scripts repository

    git clone -b release-4.3 https://github.com/thingsboard/thingsboard-ce-k8s.git
    cd thingsboard-ce-k8s/azure/monolith

Step 2. Define environment variables

    export AKS_RESOURCE_GROUP=ThingsBoardResources
    export AKS_LOCATION=eastus
    export AKS_GATEWAY=tb-gateway
    export TB_CLUSTER_NAME=tb-cluster
    export TB_DATABASE_NAME=tb-db
    export TB_REDIS_NAME=tb-redis
    echo "Resource group: $AKS_RESOURCE_GROUP, location: $AKS_LOCATION, cluster: $TB_CLUSTER_NAME, database: $TB_DATABASE_NAME"

| Variable | Default | Description |
|---|---|---|
| AKS_RESOURCE_GROUP | ThingsBoardResources | Azure Resource Group name |
| AKS_LOCATION | eastus | Azure region. Run az account list-locations for options |
| AKS_GATEWAY | tb-gateway | Azure Application Gateway name |
| TB_CLUSTER_NAME | tb-cluster | AKS cluster name |
| TB_DATABASE_NAME | tb-db | PostgreSQL server name |
| TB_REDIS_NAME | tb-redis | Valkey/Redis cache name |
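Every later command depends on these variables, and an empty one usually surfaces as a confusing CLI error. As a hedged sketch, a small guard can confirm they are all set in the current shell. `require_vars` is a hypothetical helper name introduced here for illustration.

```shell
# Check that each named variable is set to a non-empty value.
# Returns 0 when all are set, 1 otherwise.
require_vars() {
  status=0
  for name in "$@"; do
    # Indirect lookup of the variable whose name is in $name.
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "unset or empty: $name" >&2
      status=1
    fi
  done
  return "$status"
}

# Usage for this guide:
#   require_vars AKS_RESOURCE_GROUP AKS_LOCATION AKS_GATEWAY \
#     TB_CLUSTER_NAME TB_DATABASE_NAME TB_REDIS_NAME || exit 1
```

The check covers presence only. It does not validate the values against Azure naming rules.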

Step 3. Configure and create AKS cluster

Create the Azure Resource Group:

    az group create --name $AKS_RESOURCE_GROUP --location $AKS_LOCATION

See az group for more info.

Create the AKS cluster with 1 node:

    az aks create --resource-group $AKS_RESOURCE_GROUP \
        --name $TB_CLUSTER_NAME \
        --generate-ssh-keys \
        --enable-addons ingress-appgw \
        --appgw-name $AKS_GATEWAY \
        --appgw-subnet-cidr "10.2.0.0/16" \
        --node-vm-size Standard_DS3_v2 \
        --node-count 1

Key parameters:

- node-count: number of nodes per pool (default: 3)

- node-vm-size: VM size (default: Standard_DS2_v2)

- enable-addons: enables Application Gateway as a path-based load balancer

- generate-ssh-keys: generates SSH keys if missing (stored in ~/.ssh)

See az aks create for the full parameter list. Alternatively, follow the portal-based cluster setup guide.
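If you return to the cluster later, or created it with `--no-wait`, you may want to confirm that provisioning finished before moving on. The sketch below reads the `provisioningState` field with `az aks show`. The helper names `aks_state` and `aks_is_ready` are hypothetical, and the sketch assumes the variables from Step 2 are exported.

```shell
# Print the provisioning state of the cluster created above.
aks_state() {
  az aks show \
    --resource-group "$AKS_RESOURCE_GROUP" \
    --name "$TB_CLUSTER_NAME" \
    --query provisioningState \
    --output tsv
}

# Succeed only when the cluster reports "Succeeded".
aks_is_ready() {
  [ "$(aks_state)" = "Succeeded" ]
}

# Usage:
#   aks_is_ready && echo "cluster is ready"
```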

Step 4. Update the context of kubectl

    az aks get-credentials --resource-group $AKS_RESOURCE_GROUP --name $TB_CLUSTER_NAME

Verify the connection:

    kubectl get nodes

Step 5. Provision databases

5.1 Azure Database for PostgreSQL

You need to set up PostgreSQL on Azure. ThingsBoard uses it as the main database.

You may follow the Azure portal guide, keeping these requirements in mind:

- PostgreSQL version 16.x

- The instance must be accessible from the AKS cluster

- Use thingsboard as the initial database name

- Enabling high availability is recommended for production

Alternatively, create the server using the CLI (replace POSTGRES_USER and POSTGRES_PASS with your credentials):

    az postgres flexible-server create --location $AKS_LOCATION --resource-group $AKS_RESOURCE_GROUP \
        --name $TB_DATABASE_NAME --admin-user POSTGRES_USER --admin-password POSTGRES_PASS \
        --public-access 0.0.0.0 --storage-size 32 \
        --version 16 -d thingsboard

Note the host value from the command output (e.g. tb-db.postgres.database.azure.com). Also note the username and password.
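If the create output has scrolled away, the host name can usually be read back from the server resource. This is a sketch under two assumptions: the server was created as a flexible server with the name in `$TB_DATABASE_NAME`, and the host is exposed through the `fullyQualifiedDomainName` property. `pg_host` is a hypothetical helper name.

```shell
# Print the fully qualified host name of the PostgreSQL flexible server.
# Assumes AKS_RESOURCE_GROUP and TB_DATABASE_NAME are exported (Step 2).
pg_host() {
  host=$(az postgres flexible-server show \
    --resource-group "$AKS_RESOURCE_GROUP" \
    --name "$TB_DATABASE_NAME" \
    --query fullyQualifiedDomainName \
    --output tsv)
  if [ -z "$host" ]; then
    echo "could not determine the PostgreSQL host" >&2
    return 1
  fi
  echo "$host"
}

# Usage:
#   pg_host
```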

Edit tb-node-db-configmap.yml and replace YOUR_AZURE_POSTGRES_ENDPOINT_URL, YOUR_AZURE_POSTGRES_USER, and YOUR_AZURE_POSTGRES_PASSWORD with the correct values:

    nano tb-node-db-configmap.yml

5.2 Cassandra (optional)

Using Cassandra is optional. We recommend it if you plan to insert more than 5K data points per second or want to optimize storage space.

Provision additional node pools

Create 3 separate node pools with 1 node per zone. At least 4 vCPUs and 16 GB of RAM are recommended.

    az aks nodepool add --resource-group $AKS_RESOURCE_GROUP --cluster-name $TB_CLUSTER_NAME --name tbcassandra1 --node-count 1 --zones 1 --labels role=cassandra
    az aks nodepool add --resource-group $AKS_RESOURCE_GROUP --cluster-name $TB_CLUSTER_NAME --name tbcassandra2 --node-count 1 --zones 2 --labels role=cassandra
    az aks nodepool add --resource-group $AKS_RESOURCE_GROUP --cluster-name $TB_CLUSTER_NAME --name tbcassandra3 --node-count 1 --zones 3 --labels role=cassandra

Deploy Cassandra stateful set

    kubectl apply -f tb-namespace.yml
    kubectl config set-context $(kubectl config current-context) --namespace=thingsboard
    kubectl apply -f receipts/cassandra.yml

Update DB settings

    echo "  DATABASE_TS_TYPE: cassandra" >> tb-node-db-configmap.yml
    echo "  CASSANDRA_URL: cassandra:9042" >> tb-node-db-configmap.yml
    echo "  CASSANDRA_LOCAL_DATACENTER: dc1" >> tb-node-db-configmap.yml

The leading spaces inside the quotes must match the indentation of the existing keys in the ConfigMap.

Create keyspace

    kubectl exec -it cassandra-0 -- bash -c "cqlsh -e \
        \"CREATE KEYSPACE IF NOT EXISTS thingsboard \
        WITH replication = { \
            'class' : 'NetworkTopologyStrategy', \
            'dc1' : '3' \
        };\""

Step 6. Installation

Run the initial database setup:

    ./k8s-install-tb.sh --loadDemo

Where --loadDemo is an optional argument to load additional demo data.

After this command finishes you should see:

    Installation finished successfully!

Step 7. Starting

    ./k8s-deploy-resources.sh

After a few minutes, run kubectl get pods. If everything went well, the tb-node-0 pod should be in the READY state.
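Checking the pod by eye works, but a script may want a yes/no answer. The following sketch parses the default `kubectl get pods` table from standard input and treats a pod as ready when its READY column shows all containers up (for example 1/1) and its STATUS is Running. `pod_is_ready` is a hypothetical helper, and the column layout is assumed to be the default NAME, READY, STATUS, RESTARTS, AGE.

```shell
# Read `kubectl get pods` output on stdin and succeed only if the named pod
# has all containers ready and is in the Running status.
pod_is_ready() {
  awk -v pod="$1" '
    $1 == pod {
      # READY looks like "1/1": ready containers / total containers.
      split($2, r, "/")
      found = (r[1] == r[2] && r[1] > 0 && $3 == "Running")
    }
    END { exit(found ? 0 : 1) }
  '
}

# Usage:
#   kubectl get pods | pod_is_ready tb-node-0 && echo "tb-node-0 is ready"
```

Reading from standard input keeps the function independent of the cluster, so it can be tried against saved output.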

Step 8. Configure load balancers

8.1 Configure HTTP(S) load balancer

You have 2 options:

- HTTP — recommended for development. Simple configuration and minimum costs.

- HTTPS — recommended for production. Requires an SSL certificate uploaded to Application Gateway.

HTTP load balancer

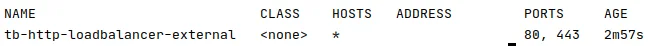

    kubectl apply -f receipts/http-load-balancer.yml

Check the status:

    kubectl get ingress

Once provisioned, you should see output similar to:

    NAME                   CLASS    HOSTS   ADDRESS         PORTS   AGE
    tb-http-loadbalancer   <none>   *       34.111.24.134   80      7m25s

Default credentials:

- System Administrator: sysadmin@thingsboard.org / sysadmin

- Tenant Administrator: tenant@thingsboard.org / tenant (if demo data loaded)

- Customer User: customer@thingsboard.org / customer (if demo data loaded)
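To log in with these credentials you need the address of the HTTP load balancer. As a sketch, the address can be read from the ingress status instead of copying it from the table. This assumes the ingress is named tb-http-loadbalancer, as in the sample output, and that Application Gateway reports an IP address rather than a host name. `tb_http_url` is a hypothetical helper name.

```shell
# Print the web UI URL based on the ingress address, or fail if the
# ingress has not been assigned an address yet.
tb_http_url() {
  ip=$(kubectl get ingress tb-http-loadbalancer \
    -o jsonpath='{.status.loadBalancer.ingress[0].ip}')
  if [ -z "$ip" ]; then
    echo "ingress has no address yet" >&2
    return 1
  fi
  echo "http://$ip/"
}

# Usage:
#   tb_http_url
```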

HTTPS load balancer

Upload your SSL certificate to Application Gateway:

    az network application-gateway ssl-cert create \
        --resource-group $(az aks show --name $TB_CLUSTER_NAME --resource-group $AKS_RESOURCE_GROUP --query nodeResourceGroup | tr -d '"') \
        --gateway-name $AKS_GATEWAY \
        --name ThingsBoardHTTPCert \
        --cert-file YOUR_CERT \
        --cert-password YOUR_CERT_PASS

Deploy the HTTPS load balancer:

    kubectl apply -f receipts/https-load-balancer.yml

Check the status:

    kubectl get ingress

8.2 Configure MQTT load balancer (optional)

    kubectl apply -f receipts/mqtt-load-balancer.yml

The load balancer forwards all TCP traffic for ports 1883 and 8883.

For MQTT over SSL, follow the MQTT over SSL guide to configure the required environment variables in tb-node.yml.

8.3 Configure UDP load balancer (optional)

    kubectl apply -f receipts/udp-load-balancer.yml

The load balancer forwards all UDP traffic for ports:

| Port | Protocol |
|---|---|
| 5683 | CoAP non-secure |
| 5684 | CoAP secure DTLS |
| 5685 | LwM2M non-secure |
| 5686 | LwM2M secure DTLS |
| 5687 | LwM2M bootstrap non-secure |
| 5688 | LwM2M bootstrap secure DTLS |

For CoAP over DTLS, follow the CoAP over DTLS guide. For LwM2M over DTLS, follow the LwM2M over DTLS guide.
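After applying the UDP load balancer, you may want to confirm that all six ports from the table are exposed. The sketch below compares a space-separated port list against the expected ports and prints any that are missing. `missing_udp_ports` is a hypothetical helper, and the usage line assumes the service is named tb-udp-loadbalancer.

```shell
# Print each expected UDP port that is absent from the given
# space-separated list of ports. Prints nothing when all are present.
missing_udp_ports() {
  for port in 5683 5684 5685 5686 5687 5688; do
    case " $1 " in
      *" $port "*) ;;        # port is present in the list
      *) echo "$port" ;;     # port is missing
    esac
  done
}

# Usage:
#   missing_udp_ports "$(kubectl get service tb-udp-loadbalancer \
#     -o jsonpath='{.spec.ports[*].port}')"
```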

8.4 Configure Edge load balancer (optional)

    kubectl apply -f receipts/edge-load-balancer.yml

The load balancer forwards all TCP traffic on port 7070.

Step 9. Validate the setup

    kubectl get ingress
    kubectl get service

Two load balancers are available:

- tb-mqtt-loadbalancer: for the TCP (MQTT) protocol

- tb-udp-loadbalancer: for the UDP (CoAP/LwM2M) protocol
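Devices connect to the external IPs of these services, so it is worth checking that none is still pending. As a sketch, the function below reads the default `kubectl get service` table from standard input, prints the name and external IP of each requested service, and fails if one is absent or has no address yet. `lb_addresses` is a hypothetical helper, and the default column order (NAME, TYPE, CLUSTER-IP, EXTERNAL-IP, PORT(S), AGE) is assumed.

```shell
# Read `kubectl get service` output on stdin and print "<name> <external-ip>"
# for every service named in the arguments. Returns non-zero when a
# requested service is missing or its external IP is not assigned.
lb_addresses() {
  awk -v names="$*" '
    BEGIN {
      n = split(names, want, " ")
      for (i = 1; i <= n; i++) need[want[i]] = 1
    }
    ($1 in need) {
      seen[$1] = 1
      if ($4 == "<pending>" || $4 == "<none>") bad = 1
      print $1, $4
    }
    END {
      for (k in need) if (!(k in seen)) bad = 1
      exit(bad ? 1 : 0)
    }
  '
}

# Usage:
#   kubectl get service | lb_addresses tb-mqtt-loadbalancer tb-udp-loadbalancer
```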

Troubleshooting

    kubectl logs -f tb-node-0

See the kubectl Cheat Sheet for more details.

Upgrading

    ./k8s-delete-resources.sh
    ./k8s-upgrade-tb.sh --fromVersion=[FROM_VERSION]
    ./k8s-deploy-resources.sh

Where FROM_VERSION is the version you are upgrading from. See Upgrade Instructions for valid values. If you are several releases behind, upgrade one version at a time.

Cluster deletion

Delete ThingsBoard pods and load balancers:

    ./k8s-delete-resources.sh

Delete all data including database:

    ./k8s-delete-all.sh

Delete the AKS cluster:

    az aks delete --resource-group $AKS_RESOURCE_GROUP --name $TB_CLUSTER_NAME